In the past, I have discussed strange sentences like garden path sentences and Moses illusions. These sentences, while strange or inaccurate in some way, are ultimately grammatical. I find sentences like these particularly fascinating because they push the limits of what our brains will tolerate while reading. There is another type of sentence I encountered recently that seems innocuous at first, but as I researched it more, I was blown away by how widely studied this particular construction is. For an example, take a look at this tweet from Dan Rather from back in 2019 and see if you can notice what is going on.

This sentence makes zero sense. If you read it quickly, though, and don’t overthink it, you might just convince yourself that it does! Look at the replies, for instance. The overwhelming majority of them completely miss the fact that this sentence is poorly formed and assume that its main point is that the president’s English is not very good. The tweet attempts to form a comparative between two groups of people along different dimensions of comparison, which makes it ungrammatical.

To visualize this, let’s break it down into two halves. “I think there are more candidates on stage who speak Spanish more fluently…” – Okay, so here he is setting up a comparison where we are looking at a quantity of people. “…than our president speaks English.” – but he finishes with a claim about how well the president at the time could speak English. He is trying to compare a number of Spanish-speaking people to the fluency of another person’s English. Even as I write this, I am having a hard time describing exactly what the sentence is “trying” to say, but I think we can all agree that it makes no sense whatsoever.

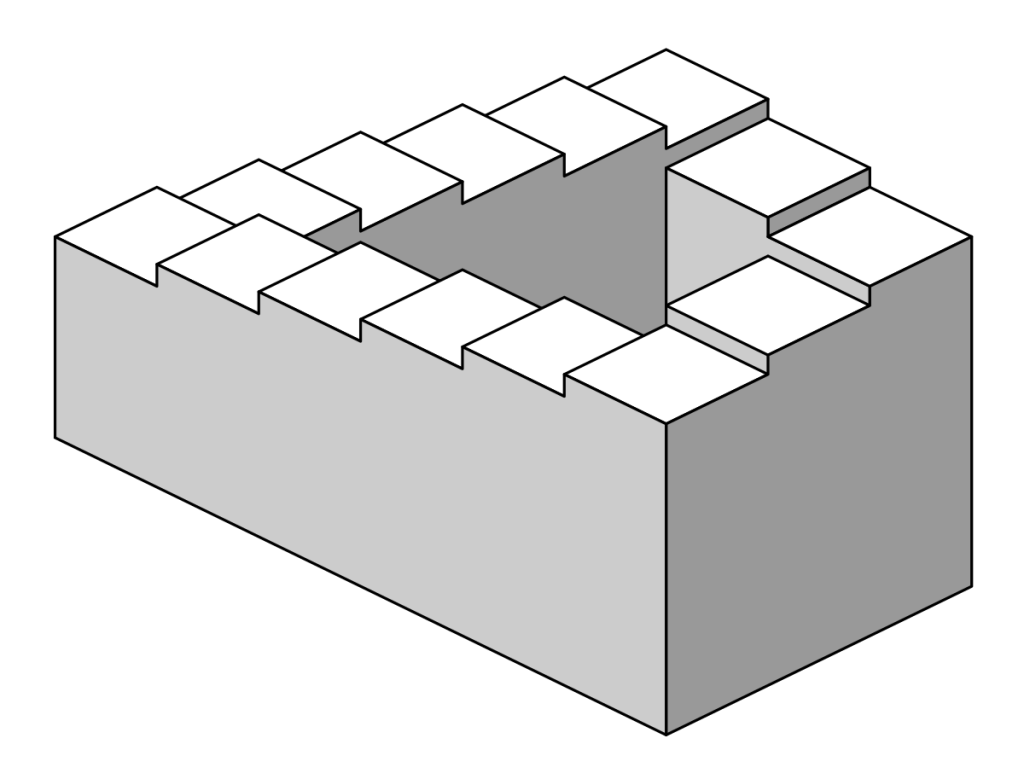

What exactly are sentences like this called? They are called comparative illusions (the sciency name) or Escher sentences (the more fun name). The name Escher sentence comes from the famous artist M.C. Escher, whose famous rendering of the Penrose stairs illusion shows a staircase that looks normal on the surface but ultimately goes nowhere and cannot function like a normal set of stairs. Honestly, if there were an award for naming things, this would absolutely win, because I cannot think of a better-fitting description than that. These sentences seem completely fine until they’re not, and then you are just left to stand back and wonder what the heck is going on. The stereotypical example used widely for this phenomenon is a little easier to spot:

- More people have been to France than I have.

Again, we see a sentence that is trying to compare two separate ideas in a single sentence. In a sentence like this one, we are presented with a set of individuals in the first clause (more people), but when we get to the second clause (I have), we discover that there is no such set of individuals with which to draw a comparison.

To be clear, this is not an issue of plurality in the second clause. The sentence “More people have been to France than we have” is equally awful.

The most striking thing about these sentences is that people will not report any sort of weirdness at first glance. It’s not until you take a longer look and try to determine exactly what is being said that you start to notice what is going on.

So, what exactly is going on then? Well, as is the case with a lot of linguistic weirdness, there is no way for us to know for sure. Some researchers have argued that, as I mentioned above, the sentence is trying to use two templates for comparison that are each fine in isolation. It is only when you combine them in the same sentence that things start to go awry.

Think of it like this. Here I will present you with two true and grammatical sentences:

- John is too tired to drive his car safely.

- John has driven for as many hours as Tim has.

These sentences both being true does not allow you to blend them together into a third sentence like so:

- John is too tired as Tim has.

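Now this is just a bad sentence and not an Escher sentence in the slightest. Let’s try to apply this same sort of logic to an Escher sentence, though. The first two sentences below are fine on their own, and the third is what you get when you blend them: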

- More people have gone to France than I could believe.

- John and Mary have gone to France more than I have.

- More people have gone to France than I have.

It could also be the case that our brains are noticing that there is deleted material at the end of this construction that we expect to be there because we see sentences like that all the time. Take this sentence for example:

- Sally ate some pizza and Amanda did too.

This sentence is completely fine, and our brains don’t struggle with it at all because we can infer that the “did too” in this case means that Amanda also ate some pizza. So with an Escher sentence, when we encounter the ending “… than I have,” our brain might just be saying “oh, I can fill in the blanks here,” and because we have some working examples to reference, we think it all makes sense and we call it close enough.

All of these theories have been hard to prove, and there hasn’t really been a concrete explanation for why we are seemingly unperturbed by these horrible sentences. I’m curious to know what other people think of them, so if you have any theories, or if you want to try to convince me that these sentences are ultimately fine, let me know down below.

Thank you for reading, folks! I hope this was informative and interesting to you. Be sure to come back next week for more interesting linguistic insights. If you have any topics that you want to know more about, please reach out and I will do my best to write about them. In the meantime, remember to speak up and give linguists more data.